A modular, GPU-accelerated architecture designed to handle complex AI workloads with speed, efficiency, and reliability across video and voice.

Throughput

84.2 GB/s

Compute Load

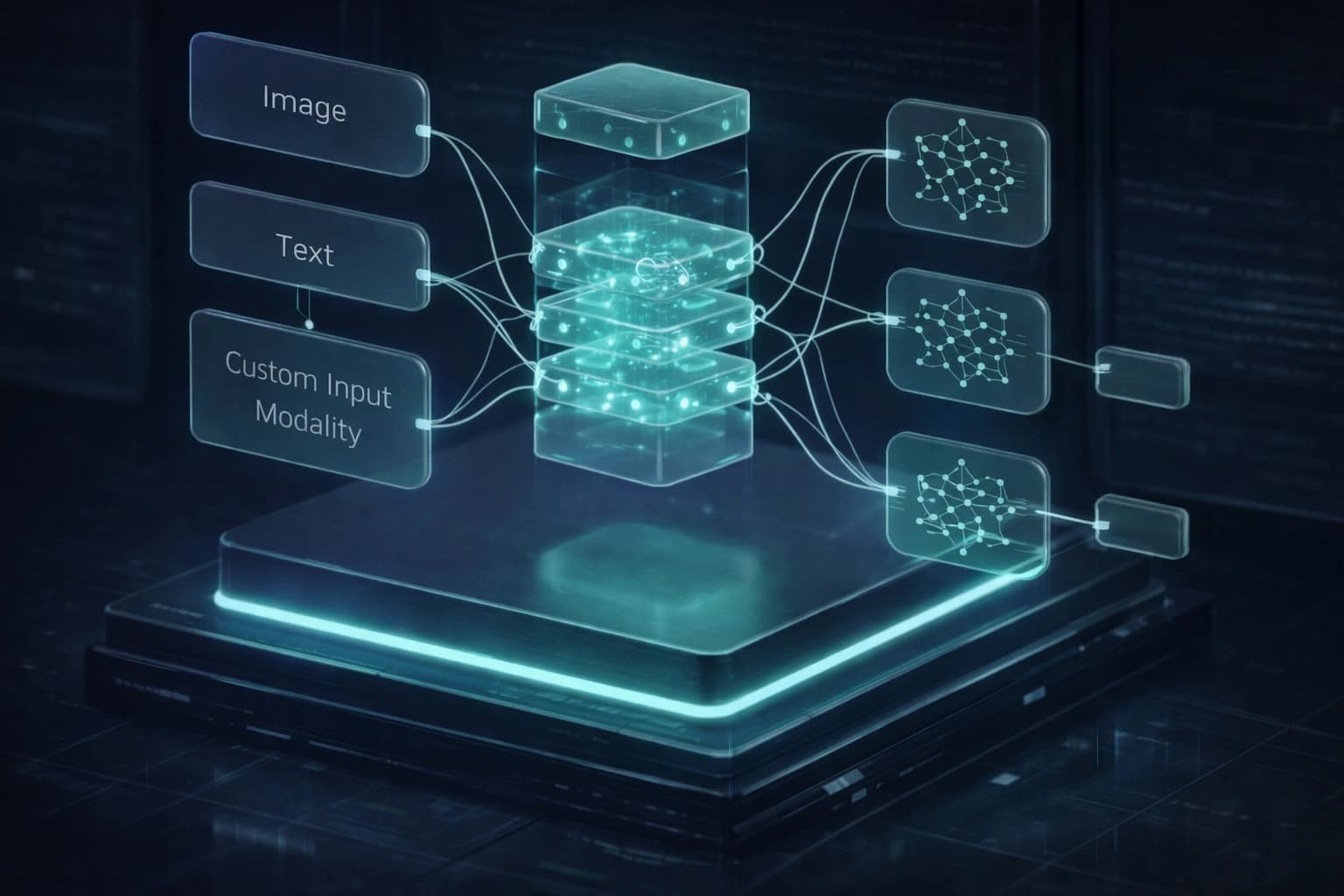

82%KashVelly's AI architecture is built to support end-to-end AI processing, from data ingestion to model execution and output delivery.

The system combines scalable infrastructure, optimized data pipelines, and advanced AI models to ensure high performance and consistent results.

With a modular design, the architecture adapts to different workloads, enabling efficient processing across a wide range of AI applications.

Collect and process input data efficiently across multiple entry points.

Prepare and structure raw data for neural network consumption.

Run enterprise-grade AI models with high-throughput hardware acceleration.

Deliver high-fidelity results via low-latency distribution channels.

Built for Reliability & Efficiency

Flexible components designed for highly diverse AI workloads.

Handles exponential growth without any performance degradation.

Optimized pipelines for real-time inference applications.

Reliable performance with enterprise-grade minimal downtime.

High-Availability Mode

Global Compute Load

Throughput_94.2%

The architecture leveragesGPU accelerationand parallel compute systems to ensure fast execution and efficient resource utilization.

KashVelly's architecture is designed to scale dynamically. Whether handling focused tasks or enterprise operations, the system ensures consistent performance.

Node_01

Active

Node_02

Active

Node_03

Active

Node_04

Active

The architecture supports integration withexternal systems, APIs, and developer tools for total customization.

import { KashVelly } from '@kv/core';

// Initialize integration pipeline

const client = new KashVelly({

apiKey: process.env.KV_KEY,

mode: 'extensible'

});

await client.connectExternal({

endpoint: 'https://api.system.io'

});

Scalable clusters for LLMs and Diffusion models.

High-speed GPU pipelines for real-time rendering.

Real-time generative audio and TTS inference.

Intelligent handling of massive secure enterprise datasets.

Optimized edge processing for instant responses.

Leverage a high-performance architecture designed for modern AI workloads and deploy applications with speed, security, and enterprise-grade reliability.

Latency

< 20ms

Uptime

99.99%

Scale

Unlimited